This article is part of a series on how to set up a bare-metal CI system for Linux driver development. Here are the different articles so far:

- Part 1: The high-level view of the whole CI system, and how to fully control test machines remotely (power on, OS to boot, keyboard/screen emulation using a serial console);

- Part 2: A comparison of the different ways to generate the rootfs of your test environment, and an introduction to the boot2container project.

In this article, we will further discuss the role of the CI gateway, and which steps we can take to simplify its deployment, maintenance, and disaster recovery.

This work is sponsored by the Valve Corporation.

Requirements for the CI gateway

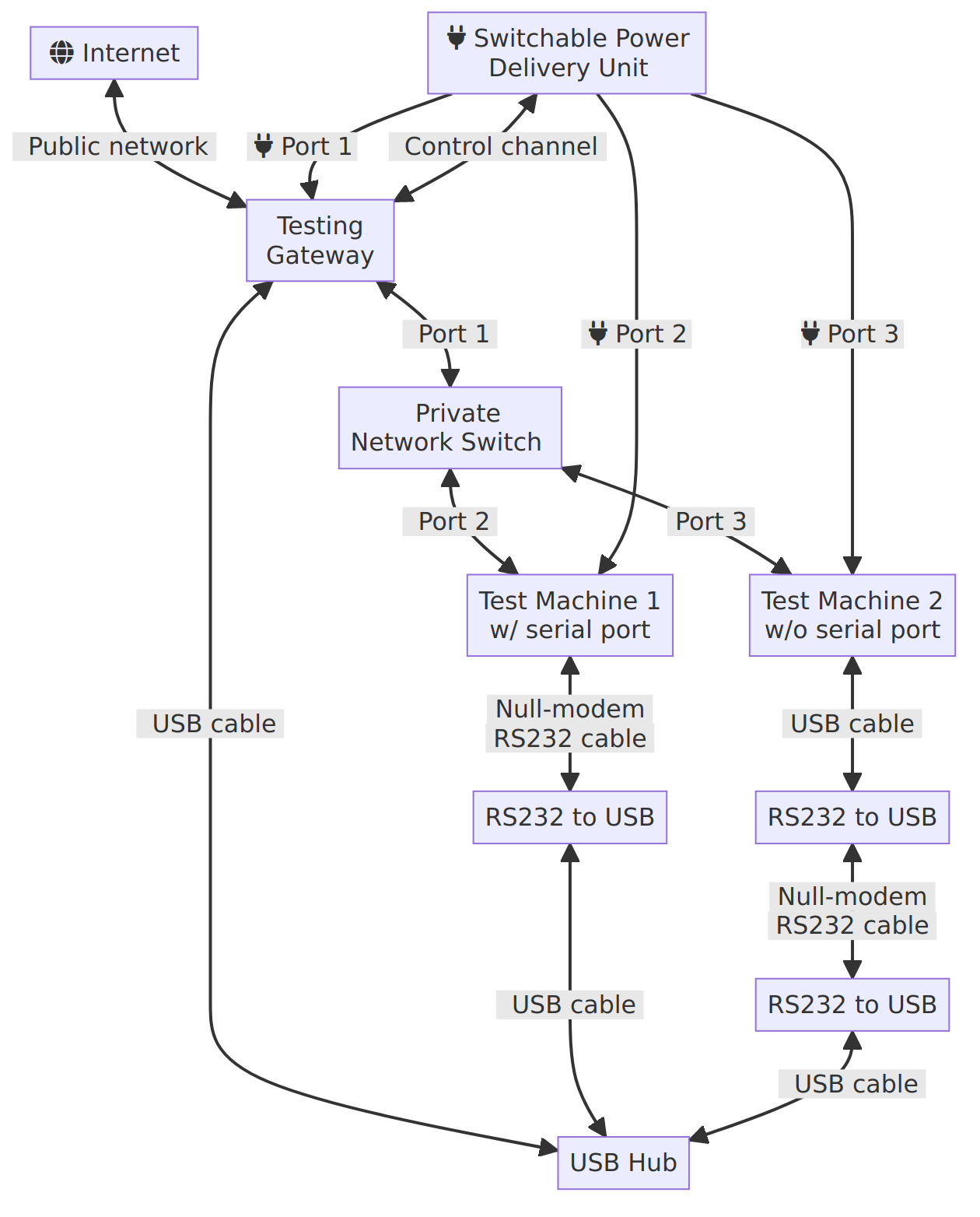

As seen in the part 1 of this CI series, the testing gateway is sitting between the test machines and the public network/internet:

The testing gateway’s role is to expose the test machines to the users, either directly or via GitLab/GitHub. As such, it will likely require the following components:

- a host Operating System;

- a config file describing the different test machines;

- a bunch of services to expose said machines and deploy their test environment on demand.

Since the gateway is connected to the internet, both the OS and the different services need to be kept updated relatively often to prevent your CI farm from becoming part of a botnet. This creates interesting issues:

- How do we test updates ahead of deployment, to minimize downtime due to bad updates?

- How do we make updates atomic, so that we never end up with a partially-updated system?

- How do we rollback updates, so that broken updates can be quickly reverted?

These issues can thankfully be addressed by running all the services in a container (as systemd units), started using boot2container. Updating the operating system and the services would then simply consist of generating a new container, running tests to validate it, pushing it to a container registry, rebooting the gateway, then waiting while the gateway downloads and executes the new services.

Using boot2container does not, however, fix the issue of how to update the kernel or boot configuration when the system fails to boot the current one. Indeed, if the kernel, boot2container, and kernel command line are stored locally, they can only be modified via an SSH connection, which requires the machine to be reachable. If a bad update prevents it from booting, the gateway will be bricked until an operator boots an alternative operating system.

The easiest way not to brick your gateway after a broken update is to power it through a switchable PDU (so that we can power cycle the machine), and to download the kernel, initramfs (boot2container), and the kernel command line from a remote server at boot time. This is fortunately possible even through the internet by using fancy bootloaders, such as iPXE, and this will be the focus of this article!

Tune in for part 4 to learn more about how to create the container.

iPXE + boot2container: Netbooting your CI infrastructure from anywhere

iPXE is a tiny bootloader that packs a punch! Not only can it boot kernels from local partitions, but it can also connect to the internet and download kernels/initramfs using HTTP(S). Even more impressive is its little scripting engine, which executes boot scripts instead of declarative boot configurations like GRUB's. This enables creating loops that endlessly try to boot until one method finally succeeds!

Let’s start with a basic example, and build towards a production-ready solution!

Netbooting from a local server

In this example, we will focus on netbooting the gateway from a local HTTP server. Let’s start by reviewing a simple script that makes iPXE acquire an IP from the local DHCP server, then download and execute another iPXE script from http://<ip of your dev machine>:8000/boot.ipxe. If any step fails, the script restarts from the beginning until a successful boot is achieved.

```
#!ipxe

echo Welcome to Valve infra's iPXE boot script

:retry

echo Acquiring an IP
# Keep retrying getting an IP, until we get one
dhcp || goto retry

echo Got the IP: ${netX/ip} / ${netX/netmask}
echo

echo Chainloading from the iPXE server...
chain http://<ip of your dev machine>:8000/boot.ipxe

# The boot failed, let's restart!
goto retry
```

Neat, right? Now, we need to generate a bootable ISO image that starts iPXE and runs the above script by default. We will then flash this ISO to a USB pendrive:

```
$ git clone git://git.ipxe.org/ipxe.git
$ make -C ipxe/src -j$(nproc) bin/ipxe.iso EMBED=<boot script file>
$ sudo dd if=ipxe/src/bin/ipxe.iso of=/dev/sdX bs=1M conv=fsync status=progress
```

Once the pendrive is connected to the gateway, make sure the gateway boots from it. You should then see the iPXE bootloader acquiring an IP, but failing to download the script from http://<ip of your dev machine>:8000/boot.ipxe. So, let’s write one:

```
#!ipxe

kernel /kernel b2c.container="docker://hello-world"
initrd /initrd
boot
```

This script specifies the following elements:

- kernel: Download the kernel at http://<ip of your dev machine>:8000/kernel, and set the kernel command line to ask boot2container to start the hello-world container;

- initrd: Download the initramfs at http://<ip of your dev machine>:8000/initrd;

- boot: Boot the specified boot configuration.
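The paths in boot.ipxe are relative references: iPXE resolves them against the URL the script was chainloaded from, following (as far as we know) the standard RFC 3986 rules. Python's urllib.parse.urljoin implements those same rules, so the short sketch below can be used to double-check which URL the gateway will request. 192.168.1.10 is a placeholder standing in for <ip of your dev machine>.

```python
from urllib.parse import urljoin

# Placeholder: the URL the embedded script chainloads (your dev machine's IP).
SCRIPT_URL = "http://192.168.1.10:8000/boot.ipxe"


def resolve(path: str, base: str = SCRIPT_URL) -> str:
    """Resolve a path found in boot.ipxe against the script's own URL (RFC 3986)."""
    return urljoin(base, path)


if __name__ == "__main__":
    for path in ("/kernel", "/initrd", "kernel", "/files/kernel"):
        print(f"{path:<14} -> {resolve(path)}")
```

With a path such as /kernel, only the scheme, host, and port of the script URL are kept, so the same boot.ipxe keeps working if it is later served from another (e.g. HTTPS) server.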

Assuming your gateway has an architecture supported by boot2container, you may now download the kernel and initrd from boot2container’s releases page. If it is not supported, open an issue or a merge request to add support for it!

Now that you have created all the necessary files for the boot, start the web server on your development machine:

```
$ ls
boot.ipxe initrd kernel
$ python -m http.server 8000
Serving HTTP on 0.0.0.0 port 8000 (http://0.0.0.0:8000/) ...
<ip of your gateway> - - [09/Jan/2022 15:32:52] "GET /boot.ipxe HTTP/1.1" 200 -
<ip of your gateway> - - [09/Jan/2022 15:32:56] "GET /kernel HTTP/1.1" 200 -
<ip of your gateway> - - [09/Jan/2022 15:32:54] "GET /initrd HTTP/1.1" 200 -
```
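Before rebooting the gateway, it can save a round trip to check from another machine that the web server really serves the three files. The following Python sketch uses only the standard library; check_boot_server is a hypothetical helper name, and the #!ipxe check simply mirrors the first line of the boot scripts above.

```python
import urllib.request

# The files the gateway requests during a boot, relative to the server root.
BOOT_FILES = ("boot.ipxe", "kernel", "initrd")


def check_boot_server(base_url: str, files=BOOT_FILES, timeout: float = 10.0) -> dict:
    """Download every boot file and return {name: size in bytes}.

    urlopen() raises urllib.error.HTTPError on a 404 (or any other HTTP error),
    and urllib.error.URLError if the server cannot be reached at all.
    """
    sizes = {}
    for name in files:
        url = f"{base_url.rstrip('/')}/{name}"
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            data = resp.read()
        # iPXE only executes scripts that start with the #!ipxe signature.
        if name.endswith(".ipxe") and not data.startswith(b"#!ipxe"):
            raise ValueError(f"{url} does not start with #!ipxe")
        sizes[name] = len(data)
    return sizes
```

For example, check_boot_server("http://<ip of your dev machine>:8000") returns the size of each file, or raises as soon as one of them is missing.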

If everything went well, the gateway should, after a couple of seconds, start downloading the boot script, then the kernel, and finally the initramfs. Once done, your gateway should boot Linux, run Docker’s hello-world container, then shut down.

Congratulations on netbooting your gateway! However, the current solution has one annoying constraint: it requires a trusted local network and server because we are using HTTP rather than HTTPS… On an untrusted network, a man in the middle could override your boot configuration and take over your CI…

If we were using HTTPS, we could download our boot script/kernel/initramfs directly from any public server, even Git forges, without fear of any man in the middle! Let’s try to achieve this!

Netbooting from public servers

In the previous section, we managed to netboot our gateway from the local network. In this section, we improve on it by netbooting over HTTPS. This makes it possible to boot from a public server, such as one hosted at Linode for $5/month.

As I said earlier, iPXE supports HTTPS. However, if you are anything like me, you may be wondering how such a small bootloader could know which root certificates to trust. The answer is that iPXE generates an SSL certificate at compilation time, which is then used to sign all of the root certificates trusted by Mozilla (default), or any number of certificates you may want. See iPXE’s crypto page for more information.

WARNING: iPXE currently does not like certificates exceeding 4096 bits. This can be a limiting factor when trying to connect to existing servers. We hope to one day fix this bug, but in the meantime, you may be forced to use a 2048-bit Let’s Encrypt certificate on a self-hosted web server. See our issue for more information.

WARNING 2: iPXE only supports a limited number of ciphers. You’ll need to make sure they are listed in NGINX’s ssl_ciphers configuration:

```
ssl_ciphers AES-128-CBC:AES-256-CBC:AES256-SHA256:AES128-SHA256:AES256-SHA:AES128-SHA;
```

To get started, install NGINX + Let’s Encrypt on your server following your favourite tutorial, copy the boot.ipxe, kernel, and initrd files to the root of the web server, then make sure you can download them using your browser.

With this done, we just need to edit iPXE’s general config C header to enable HTTPS support:

```
$ sed -i 's/#undef\tDOWNLOAD_PROTO_HTTPS/#define\tDOWNLOAD_PROTO_HTTPS/' ipxe/src/config/general.h
```
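The \t escapes in this sed expression are a GNU sed extension; other implementations, such as the BSD sed shipped with macOS, may not treat \t as a tab, in which case the command silently changes nothing. The small Python sketch below performs the same #undef to #define switch and fails loudly if the option is missing. enable_option and enable_https are hypothetical helper names; the \b word boundary ensures that asking for DOWNLOAD_PROTO_HTTP would not touch the DOWNLOAD_PROTO_HTTPS line.

```python
import re
from pathlib import Path


def enable_option(header: str, option: str) -> str:
    """Switch '#undef OPTION' to '#define OPTION', keeping the rest of the line."""
    name = re.escape(option)
    new, count = re.subn(rf"^#undef(\s+{name}\b)", r"#define\1", header, flags=re.MULTILINE)
    if count == 0 and not re.search(rf"^#define\s+{name}\b", header, flags=re.MULTILINE):
        raise ValueError(f"{option} not found in header")
    return new  # identical to the input if the option was already enabled


def enable_https(path: str = "ipxe/src/config/general.h") -> None:
    """Enable HTTPS downloads in iPXE's general configuration header."""
    header = Path(path)
    header.write_text(enable_option(header.read_text(), "DOWNLOAD_PROTO_HTTPS"))
```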

Then, let’s update our boot script to point to the new server:

```
#!ipxe

echo Welcome to Valve infra's iPXE boot script

:retry

echo Acquiring an IP
# Keep retrying getting an IP, until we get one
dhcp || goto retry

echo Got the IP: ${netX/ip} / ${netX/netmask}
echo

echo Chainloading from the iPXE server...
chain https://<your server>/boot.ipxe

# The boot failed, let's restart!
goto retry
```

And finally, let’s recompile iPXE, reflash the gateway pendrive, and boot the gateway!

```
$ make -C ipxe/src -j$(nproc) bin/ipxe.iso EMBED=<boot script file>
$ sudo dd if=ipxe/src/bin/ipxe.iso of=/dev/sdX bs=1M conv=fsync status=progress
```

If all went well, the gateway should boot and run the hello-world container once again! Let’s continue our journey by provisioning and backing up the local storage of the gateway!

Provisioning and backups of the local storage

In the previous section, we managed to control the boot configuration of our gateway via a public HTTPS server. In this section, we will improve on that by provisioning and backing up any local file the gateway container may need.

Boot2container has a nice feature that enables you to create a volume, provision it from a bucket in an S3-compatible cloud storage, and sync back any local change. This is done by adding the following arguments to the kernel command line:

- `b2c.minio="s3,${s3_endpoint},${s3_access_key_id},${s3_access_key}"`: URL and credentials to the S3 service;

- `b2c.volume="perm,mirror=s3/${s3_bucket_name},pull_on=pipeline_start,push_on=changes,overwrite,delete"`: Create a `perm` podman volume, mirror it from the bucket `${s3_bucket_name}` when booting the gateway, then push any local change back to the bucket. Delete or overwrite any existing file when mirroring;

- `b2c.container="-ti -v perm:/mnt/perm docker://alpine"`: Start an alpine container, and mount the `perm` container volume to `/mnt/perm`.
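Since these three arguments always travel together, it can help to generate them rather than write them by hand. The sketch below is a hypothetical helper, not part of boot2container: it only reproduces the argument format shown above, and it rejects values containing commas or double quotes, on the assumption that either would break the comma-separated fields or the quoting.

```python
def b2c_storage_args(endpoint: str, key_id: str, secret: str, bucket: str,
                     alias: str = "s3", image: str = "docker://alpine") -> str:
    """Return the b2c.minio/b2c.volume/b2c.container arguments as one string."""
    for field in (endpoint, key_id, secret, bucket, alias):
        if "," in field or '"' in field:
            # Do not echo the value: it may be a secret.
            raise ValueError("S3 fields must not contain ',' or '\"'")
    args = {
        # Name the S3 service `alias` and give boot2container its credentials.
        "b2c.minio": f"{alias},{endpoint},{key_id},{secret}",
        # Mirror the bucket into the `perm` volume at boot, push local changes back.
        "b2c.volume": (f"perm,mirror={alias}/{bucket},pull_on=pipeline_start,"
                       "push_on=changes,overwrite,delete"),
        # Run the container with the volume mounted at /mnt/perm.
        "b2c.container": f"-ti -v perm:/mnt/perm {image}",
    }
    return " ".join(f'{name}="{value}"' for name, value in args.items())
```

For instance, b2c_storage_args("https://s3.example.com", "KEYID", "SECRET", "my-bucket") yields the three arguments listed above with the placeholders filled in.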

Pretty, isn’t it? Provided that your bucket is configured to save all the revisions of every file, this trick will kill three birds with one stone: initial provisioning, backup, and automatic recovery of the files in case the local disk fails and gets replaced with a new one!

The issue is that the boot configuration is currently open for everyone to see, provided they know where to look. This means that anyone could tamper with your local storage or even use your bucket to store their files…

Securing the access to the local storage

To prevent attackers from stealing our S3 credentials by simply pointing their web browser to the right URL, we can authenticate incoming HTTPS requests by using an SSL client certificate. A different certificate would be embedded in every gateway’s iPXE bootloader and checked by NGINX before serving the boot configuration for this precise gateway. By limiting access to a machine’s boot configuration to its associated client certificate fingerprint, we even prevent compromised machines from accessing the data of other machines.
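To make the per-gateway lookup concrete, here is a minimal Python sketch of how a web service could map a client certificate to that gateway's directory (the files/<fingerprint of your gateway>/ layout used by ipxe-boot-server). It assumes the fingerprint is the lowercase hexadecimal SHA-1 of the DER-encoded certificate, which is the format of NGINX's $ssl_client_fingerprint variable; whether ipxe-boot-server uses this exact format is an assumption, and the function names are hypothetical.

```python
import hashlib
import re
import ssl
from pathlib import Path

# 40 lowercase hex digits: a SHA-1 digest, as printed by hashlib's hexdigest().
FINGERPRINT_RE = re.compile(r"[0-9a-f]{40}")


def cert_fingerprint(pem: str) -> str:
    """SHA-1 of the DER-encoded certificate, as lowercase hex without colons."""
    der = ssl.PEM_cert_to_DER_cert(pem)
    return hashlib.sha1(der).hexdigest()


def gateway_dir(files_root: str, fingerprint: str) -> Path:
    """Return the only directory a gateway with this fingerprint may read from.

    The fingerprint must come from the TLS layer (e.g. NGINX, after verifying
    the client certificate), never from anything the client can set directly.
    """
    if not FINGERPRINT_RE.fullmatch(fingerprint):
        # Reject anything that is not a bare fingerprint, e.g. "../other-gateway".
        raise PermissionError("invalid client certificate fingerprint")
    return Path(files_root) / fingerprint
```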

Additionally, secrets should not be kept in the kernel command line, as any process executed on the gateway could easily gain access to it by reading /proc/cmdline. To address this issue, boot2container has a b2c.extra_args_url argument that sources additional parameters from the given URL. If this URL is generated every time the gateway downloads its boot configuration, can be accessed only once, and expires soon after being created, then secrets can be kept private inside boot2container and are not exposed to the containers it starts.
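The one-time, short-lived URL described above can be sketched as a small token store. This is a hypothetical illustration built on Python's standard library, not the code of valve-infra's ipxe-boot-server: each boot gets a fresh random token, the first request for it consumes it, and unused tokens stop working after ttl seconds.

```python
import secrets
import threading
import time
from typing import Optional


class OneTimeSecrets:
    """Secrets behind random tokens that work once and expire after `ttl` seconds."""

    def __init__(self, ttl: float = 60.0, clock=time.monotonic):
        self._ttl = ttl
        self._clock = clock  # injectable, so tests can control time
        self._lock = threading.Lock()  # requests may be handled concurrently
        self._pending = {}  # token -> (expiry time, payload)

    def create(self, payload: str) -> str:
        """Register a payload and return the unguessable token that unlocks it."""
        token = secrets.token_urlsafe(32)
        with self._lock:
            now = self._clock()
            # Drop expired entries so unredeemed tokens do not pile up.
            for stale in [t for t, (exp, _) in self._pending.items() if exp <= now]:
                del self._pending[stale]
            self._pending[token] = (now + self._ttl, payload)
        return token

    def redeem(self, token: str) -> Optional[str]:
        """Return the payload on first use; None if unknown, already used, or expired."""
        with self._lock:
            entry = self._pending.pop(token, None)
        if entry is None:
            return None
        expiry, payload = entry
        return payload if self._clock() < expiry else None
```

A web service would call create() while rendering a gateway's boot.ipxe, embed the token in the b2c.extra_args_url URL, and answer requests for that URL with redeem(), returning an error such as 404 when it yields None.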

Implementing these suggestions in a blog post is a little tricky, so I suggest you check out valve-infra’s ipxe-boot-server component for more details. It provides a Makefile that makes it super easy to generate working certificates and create bootable gateway ISOs, a small Python-based web service that will serve the right configuration to every gateway (including one-time secrets), and step-by-step instructions to deploy everything!

Assuming you decided to use this component and followed the README, you should then configure the gateway in this way:

```
$ pwd
/home/ipxe/valve-infra/ipxe-boot-server/files/<fingerprint of your gateway>/
$ ls
boot.ipxe initrd kernel secrets
$ cat boot.ipxe
#!ipxe

kernel /files/kernel b2c.extra_args_url="${secrets_url}" b2c.container="-v perm:/mnt/perm docker://alpine" b2c.ntp_peer=auto b2c.cache_device=auto
initrd /files/initrd
boot
$ cat secrets
b2c.minio="bbz,${s3_endpoint},${s3_access_key_id},${s3_access_key}" b2c.volume="perm,mirror=bbz/${s3_bucket_name},pull_on=pipeline_start,push_on=changes,overwrite,delete"
```
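The listing does not show how ${secrets_url} (or the S3 values in secrets) get filled in. If you write your own Python service, one plausible approach, assumed here rather than taken from ipxe-boot-server, is the standard library's string.Template, whose ${name} placeholders match this syntax. One caveat: Template rejects iPXE's own runtime variables such as ${netX/ip} (the / is not a valid placeholder name), so those would have to be escaped as $${netX/ip} in a served template.

```python
from string import Template


def render(template_text: str, **values: str) -> str:
    """Fill ${name} placeholders.

    Raises KeyError if a placeholder has no value, and ValueError on
    ill-formed placeholders such as an unescaped ${netX/ip}.
    """
    return Template(template_text).substitute(**values)
```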

And that’s it! We finally made it to the end, and created a secure way to provision our CI gateways with the wanted kernel, Operating System, and even local files!

When Charlie Turner and I started designing this system, we felt it would be a clean and simple way to solve our problems with our CI gateways, but the implementation ended up being quite a bit trickier than the high-level view… especially the SSL certificates! However, the certainty that we can now deploy updates and fix our CI gateways even when they are physically inaccessible to us (provided the hardware and PDU are fine) definitely made it all worth it, and made the prospect of having users depend on our systems less scary!

Let us know how you feel about it!

Conclusion

In this post, we focused on provisioning the CI gateway with its boot configuration and local files via the internet. This drastically reduces the risk that updating the gateway’s kernel would result in an extended loss of service, as the kernel configuration can quickly be reverted by changing the boot config files, which are served from a cloud service provider.

The local file provisioning system also doubles as a backup and disaster recovery system, which automatically kicks in after a hardware failure thanks to the constant mirroring of the local files with an S3-compatible cloud storage bucket.

In the next post, we will be talking about how to create the infra container, and how we can minimize downtime during updates by not needing to reboot the gateway.

That’s all for now, thanks for making it to the end!